Amazon Developing Cheaper AI Chips – Report

Engineers at Amazon’s chip lab in Austin, Texas, are racing ahead to develop cheaper AI chips that are faster than Nvidia’s

Amazon is hard at work developing faster artificial intelligence (AI) chips that are cheaper than Nvidia’s offerings.

Reuters visited Amazon.com’s chip lab in Austin, Texas, where half a dozen engineers have been putting a closely guarded new server design through its paces.

Amazon executive Rami Sinno told Reuters the server was equipped with Amazon’s AI chips that compete with those from Nvidia.

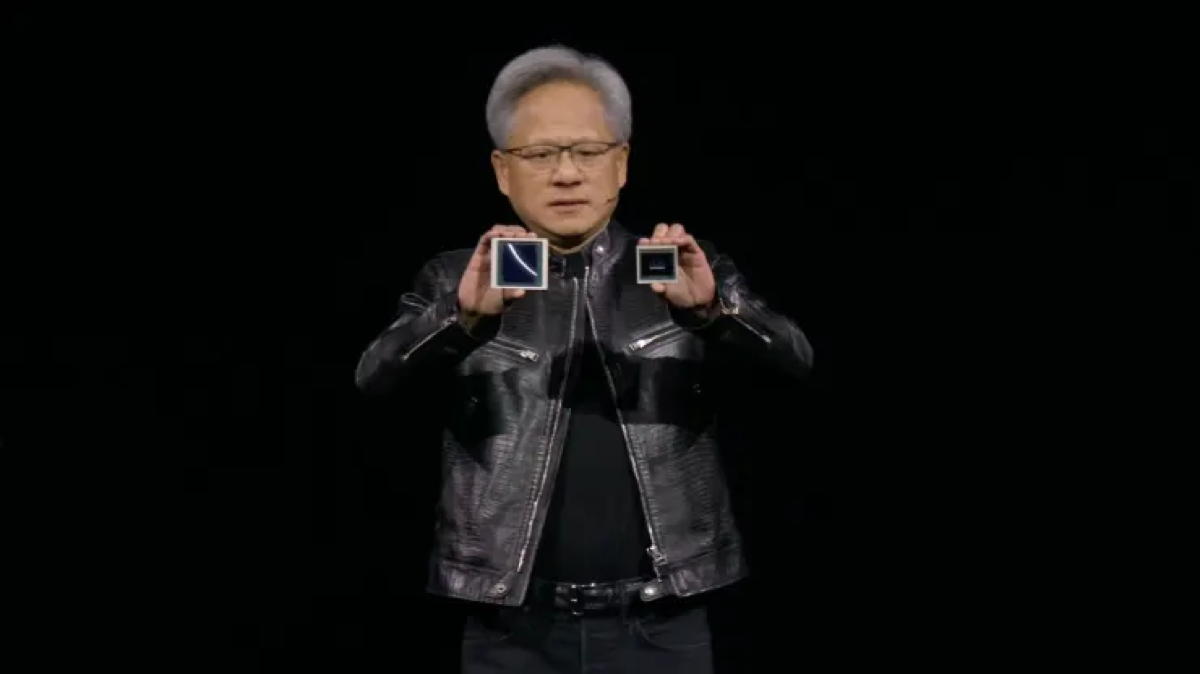

AI chips

Last November, at its AWS re:Invent conference in Las Vegas, Amazon announced the next generation of two AWS-designed chip families – AWS Graviton4 and AWS Trainium2.

Amazon said at the time that the new processors would deliver “advancements in price performance and energy efficiency for a broad range of customer workloads, including machine learning (ML) training and generative artificial intelligence (AI) applications.”

This week Reuters, citing Rami Sinno, director of engineering at Amazon’s Annapurna Labs, which is part of AWS, reported that Amazon’s customers were increasingly demanding cheaper alternatives to Nvidia.

Amazon purchased Israel-based Annapurna Labs in 2015 for a reported $350m to $370m.

Annapurna has been responsible for developing CPUs under the Graviton family and machine-learning ASICs under the Trainium and Inferentia brands.

According to the Reuters report, Amazon’s workhorse chip, Graviton, has been under development for nearly a decade and is now on its fourth generation. The AI chips, Trainium and Inferentia, are newer designs.

“So the offering of up to 40 percent, 50 percent in some cases of improved price (and) performance – so it should be half as expensive as running that same model with Nvidia,” David Brown, VP, Compute and Networking at AWS, reportedly told Reuters on Tuesday.

Cheaper AI chips

The push for cheaper AI chips comes as many have taken to calling the high prices of Nvidia’s leading AI chips “the Nvidia tax”.

Nvidia’s AI chips are not cheap, although Nvidia does not disclose H100 prices. That said, each chip can reportedly sell for anywhere from $16,000 to $100,000, depending on the volume purchased and other factors.

And when companies require hundreds of thousands of H100 chips for their AI services, the hefty financial investment often required to compete in this sector becomes clear.
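
To put that scale in perspective, the arithmetic can be sketched in a few lines of Python. This is a rough illustration only: the per-chip figures are the reported $16,000 to $100,000 range cited above, while the fleet size of 100,000 chips and the function name `fleet_cost_range` are assumptions chosen for the example, not figures from Nvidia, Amazon or Reuters. It also ignores volume discounts, servers, networking and power.

```python
def fleet_cost_range(chip_count, low_price=16_000, high_price=100_000):
    """Return the (low, high) total spend in USD for a fleet of chips.

    Defaults are the reported per-chip price range; chip_count is a
    hypothetical fleet size supplied by the caller.
    """
    return chip_count * low_price, chip_count * high_price


# Hypothetical fleet of 100,000 chips (an assumption for illustration).
low, high = fleet_cost_range(100_000)
print(f"${low / 1e9:.1f}bn to ${high / 1e9:.1f}bn")
```

Under those assumptions, chip purchases alone would run from roughly $1.6bn to $10bn.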

This has led many other big-name tech firms, including Meta, Microsoft and Google, to develop or upgrade their own in-house AI chips.